The Product That Already Worked

The Tesla Biohealer wasn’t just empty. By the time anyone bothered to look inside, it had already fulfilled its purpose. The promise had been accepted, the language trusted, the belief established. And once belief takes root, it doesn’t remain still. It spreads. It calcifies. And increasingly, it becomes something to be sold.

What appears at first glance to be a fringe curiosity is, in reality, a node in a much larger system — one in which distrust is cultivated, certainty is manufactured, and belief itself becomes a commodity. The Biohealer is not an anomaly but a product of an ecosystem where conspiracy, wellness culture, and digital amplification converge to turn conviction into profit and vulnerability into opportunity.

An Economy, Not an Accident

Follow the money and the pattern becomes unmistakable. What looks like a scattershot collection of scams resolves into something more organized — an economy, not an accident. The Biohealer is merely one item in a marketplace where distrust is nurtured, belief is reinforced, and both are systematically monetized.

At every level, someone is paid. Influencers promote devices and protocols to their audiences, often through affiliate links or undisclosed sponsorships. Practitioners — some credentialed, many not — sell consultations, treatments, and access to “exclusive” knowledge. Companies manufacture products whose value lies not in function but in narrative. The result is not simply a scam, but an economy of belief, where revenue depends on maintaining conviction rather than demonstrating results.

What has changed is not the structure but the distribution. Earlier generations encountered advertising through media with at least some oversight: public broadcasters, regulated networks, standards bodies empowered to challenge false claims. Today, audiences consume content through decentralized platforms populated by independent creators operating with minimal accountability. Regulatory frameworks lag behind; enforcement is sporadic at best.

Trust as Inventory

Sponsorship becomes the bridge between belief and profit. As long as the cheque clears, the product appears — seamlessly integrated into content, framed as personal discovery rather than paid promotion. And when creators understand that their audiences are already primed — skeptical of institutions, receptive to alternative explanations, drawn to insider knowledge — the incentives align perfectly. The more credulous or ideologically aligned the audience, the more valuable it becomes. Trust becomes inventory.

This model is not new. It follows a well‑worn blueprint. In the 19th century, traveling salesmen hawked “electric cures” and miracle tonics, promising vitality through unseen forces. In the early 20th century, radioactive health products claimed to energize the body, often with devastating consequences. More recently, multi‑level marketing schemes repackaged wellness as entrepreneurship, turning supplements, essential oils, and detox regimens into both product and identity. Each iteration updates the language — electricity becomes quantum, radiation becomes frequency — but the underlying structure remains unchanged: promise transformation, avoid verification, and sell the possibility of both.

Faith, for a Price

Faith healing offers perhaps the clearest parallel. For decades, televangelists and charismatic preachers have built empires on the promise of invisible intervention: healing delivered not through medicine but through belief itself, or at most through ampules of mystical healing water from sacred pools (or, more likely, tap water straight from the Bakersfield municipal water treatment plant). Congregants are asked to trust, to give, to demonstrate faith through financial commitment. The logic is circular and self-reinforcing: if the healing does not come, the failure lies not in the method, but in the believer. What matters is not the outcome but the continuation of belief, and the revenue that sustains it.

The overlap with modern wellness grifts is not incidental. In both cases, authority is performed rather than demonstrated, skepticism is reframed as weakness, and financial contribution becomes a proxy for conviction. Whether it is a donation, a subscription, or a high‑priced device, the transaction converts belief into material support for the system that produced it.

In contemporary forms, this economy expands through fear. Anti‑vaccine detox kits, immune‑boosting supplements, and survival gear are marketed alongside narratives of institutional collapse and hidden danger. The message is consistent: the world is unsafe, the systems meant to protect you are compromised, and the solution is available — for a price. Outrage and distrust are not byproducts; they are fuel. The more uncertain the audience feels, the more valuable certainty becomes — and the easier it is to sell.

Selling Certainty Through Fear

What emerges is not opportunism but infrastructure. A network of creators, sellers, and amplifiers, each reinforcing the same beliefs while extracting value from them. The product may change — from Biohealer to supplement to protocol — but the mechanism remains constant. Belief is established, validated, and monetized at scale.

In this system, the question is no longer whether something works. It is whether it sells.

If belief is the product, social media is the distribution engine. Platforms like TikTok, Facebook, Instagram, and YouTube did not invent conspiratorial thinking, but they refined and accelerated it — transforming what was once slow, localized, and fringe into something immediate, global, and self‑reinforcing.

The Distribution Engine

At the centre of this system is a simple principle: engagement is value. Content is not prioritized for accuracy but for performance — likes, comments, shares, watch time. And few things perform better than emotion. Anger, fear, outrage, moral certainty — these are not side effects of viral content; they are its fuel. The more intense the reaction, the more the system learns to surface similar material.

This is not a flaw. It is the design.

Human attention is naturally drawn to threat. Psychologists call it negativity bias — the tendency to prioritize alarming or emotionally charged information over neutral content. Algorithms do not need to understand this concept to exploit it. They simply observe behavior. If users linger on videos about hidden dangers, suppressed cures, or institutional betrayal, the system responds by offering more of the same. Curiosity becomes pattern; pattern becomes feed.

The process is frictionless. A claim that once required effort to circulate — a pamphlet, a meeting, a chain of conversations — can now reach millions with a single tap. A video suggesting that energy devices can heal the body, or that mainstream medicine is withholding cures, can be shared, clipped, remixed, and redistributed across platforms in minutes. Each iteration strips away context and adds emotional clarity, making the message easier to consume and harder to question.

And it does not exist in isolation. Between conspiratorial monologues and emotionally charged testimonials, the feed fills in the gaps: a sponsored post for an offshore gambling site, a “get rich quick” crypto scheme, a promoted supplement promising sharper focus. Paid content blends seamlessly into organic belief. The same systems that amplify distrust also sell against it — monetizing attention wherever it lingers.

Engagement Over Truth

In this environment, the distinction between information and advertisement erodes. Everything is optimized for the same outcome: capture attention, hold it, convert it — into clicks, purchases, belief. Whether the product is a device, a financial scheme, or a narrative about hidden truth becomes secondary. What matters is performance.

Personalization tightens the loop. Engage with one piece of content — watch it to the end, pause on it, share it — and the system infers interest. The next recommendation is not random; it is calibrated. Slightly more extreme, slightly more certain, slightly more aligned with the trajectory already established. Over time, the feed does not merely reflect belief. It shapes it.

This is why conspiratorial content performs so well. It offers simple explanations for complex problems. It rewards the viewer with a sense of hidden knowledge — an invitation into a reality that feels deeper, more coherent, more intentional than the one presented by institutions. And it evokes strong emotional responses, which the system interprets as relevance. In an environment governed by engagement, the most emotionally efficient explanation often wins, regardless of whether it is true.

Communities of Certainty

From there, communities begin to form. Not accidentally, but predictably. People gravitate toward others who share the same interpretations, the same suspicions, the same language. Within these groups, belief becomes more than an opinion — it becomes a marker of belonging. Agreement is rewarded with visibility, affirmation, and status. Skepticism, by contrast, is treated as disruption. Those who question the dominant narrative may be dismissed as “sheep,” “shills,” or infiltrators — outsiders threatening the coherence of the group.

Over time, the boundaries harden. Information from outside the community is framed as biased or corrupted. Internal sources are elevated, repeated, and protected. Moderation — formal or informal — removes dissenting voices, leaving behind a more uniform, more confident, and more insulated worldview. What emerges is not just a community but a closed system — one where skepticism is reframed as betrayal, and belief becomes the cost of admission.

Inside that system, repetition does the rest.

The more often a claim is encountered, the easier it becomes to process. Psychologists call this cognitive fluency — the sense that something feels true because it feels familiar. Over time, the distinction between hearing something and verifying something begins to blur. A phrase repeated across videos, posts, and comments acquires weight not through evidence but through exposure. The brain, optimizing for efficiency, mistakes recognition for accuracy.

Online, this effect is amplified. The same idea appears across multiple formats — short videos, memes, testimonials, diagrams — each reinforcing the last. It may come from different accounts, different voices, different contexts, but the message remains consistent. Even those who initially question the claim can begin to feel its pull. Familiarity erodes resistance. Certainty becomes ambient.

These mechanisms do not operate in isolation. They reinforce one another.

Algorithms amplify emotionally charged content.

Communities form around that content.

Repetition within those communities makes the content feel true.

Heightened belief drives further engagement — feeding the algorithm, which amplifies it again.

The loop closes.

In a feed where everything is trying to sell something, belief itself becomes just another product — packaged, targeted, and delivered between ads. In that environment, misinformation does not need to be convincing in any traditional sense. It only needs to be engaging, repeatable, and socially reinforced. Correction struggles to compete — not because it is unavailable, but because it is slower, more conditional, less emotionally satisfying. Truth requires effort. Belief is delivered.

And once belief is amplified and stabilized in this way, it becomes remarkably durable — resistant not just to evidence, but to the very idea that evidence should matter at all.

Once belief is entrenched, it requires something more durable than repetition. It needs to feel justified. It needs to look, at least superficially, as though it has been tested, measured, and confirmed.

This is where the illusion of evidence enters.

The Illusion of Evidence

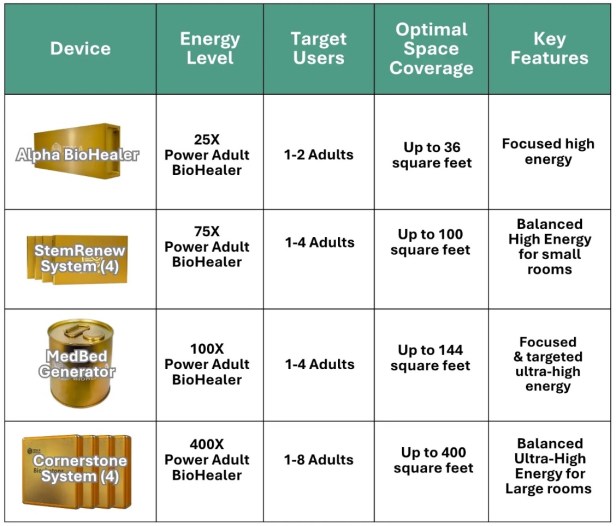

Tesla BioHealing’s own published materials offer a clear example. The goal is not to demonstrate efficacy in any rigorous sense, but to construct the appearance of scientific validation — enough structure, enough terminology, enough data points to signal legitimacy without ever meeting the standards that would make that legitimacy real. Quantity stands in for quality. Format stands in for substance.

One frequently cited paper, “Energetic homeostasis achieved through biophoton energy and accompanying medication treatment resulted in sustained levels of Thyroiditis‑Hashimoto’s, iron, vitamin D & vitamin B12,” is presented as evidence of therapeutic impact. In practice, it is little more than a single‑patient case report authored by a company employee. The claims are sweeping; the data is mundane. Vitamin D remains deficient. Hemoglobin remains in the anemic range. B12 fluctuates within a normal range. The observed changes align with conventional treatment (iron infusions, supplementation), not with any novel “biophoton” effect.

What fills the gap is not evidence but instrumentation. The paper leans heavily on “Bio‑Well scans,” a technique derived from gas discharge visualization, used here to quantify “energy levels” in precise numerical terms. The numbers are formatted in tables, expressed in joules, given the visual language of measurement. What is missing is any explanation of what, physically, is being measured, how those measurements are validated, or whether they are reproducible. The structure mimics science. The substance does not.

A second study, “Biophoton Therapy for Chronic Pain: Clinical and Real‑World Breakthrough,” expands the scale but not the rigour. Published in a low‑profile journal, it claims improvements across conditions as varied as Parkinson’s disease and Lyme disease. The authors are, again, employees of the company producing the device. The conflict of interest is not incidental — it is foundational.

The Performance of Science

Here, the appearance of breadth stands in for the controls that are missing. Testimonials, customer surveys, and self‑reported outcomes are treated as clinical evidence. Measurement tools include “meridian energy mapping,” thermographic colour shifts, and live blood microscopy, methods widely regarded as unvalidated or pseudoscientific. The details that would anchor the findings in reality (control groups, blinding, statistical methodology) are absent. Claims of “quantum‑level cellular” effects are made without mechanism, measurement, or replication.

Taken together, these studies do not establish efficacy. They establish plausibility through presentation. Tables, charts, technical phrasing, and the formal tone of a research paper create the impression that something rigorous has occurred. For readers without the tools to interrogate methodology, that impression is often enough.

This is the final step in the conversion of belief into certainty.

Where algorithms amplify and communities reinforce, “studies” legitimize. They provide something to point to, something to cite, something to circulate when skepticism arises. Not as proof in the scientific sense, but as reassurance in the psychological one. The presence of data — any data — becomes a substitute for evaluation. Numbers imply measurement. Measurement implies truth.

In this way, the system closes in on itself. Belief is no longer sustained by narrative alone, but by artifacts that resemble verification. The question is no longer whether the claim is true, but whether it has been documented. And once documentation, however flawed, enters the conversation, doubt becomes easier to dismiss.

What is being sold, ultimately, is not just a device or a claim, but the experience of having done the research — of having seen the evidence, of having verified it for oneself. The performance of inquiry replaces inquiry itself.

And in a system already primed to reward belief, that performance is often indistinguishable from proof.

A System That Doesn’t Need Truth

Taken together, the system is remarkably efficient. Belief is seeded through suggestion, amplified through algorithms, reinforced through community, monetized through influence, and finally legitimized through the appearance of evidence. At no point does it require proof — only performance. Each layer supports the next, until the distinction between what is true and what merely feels true becomes increasingly difficult to maintain. By the time the claim is questioned, it is no longer just an idea but an identity — protected, defended, and sustained by a structure designed to keep it intact.

And yet, for all its sophistication, the system rests on something far less stable than it appears. It depends on a series of contradictions — between skepticism and credulity, independence and influence, rejection of authority and quiet submission to it. These tensions are not flaws in the system; they are what allow it to function.

Because once belief becomes identity, it no longer needs to be consistent. It only needs to be maintained.

Read PART 3: On The Hypocrisy of Belief here.
